When setting up a new application or platform, one of the most important things you will need is messaging. Every part of the platform needs data, either in real time or after the fact, so messages from other services within the application boundary have to be processed as efficiently as possible. This inter-executable messaging ranges from simple notifications about something that happened in a domain (‘customer X changed his name to Y’) to a queue of outstanding jobs that are to be executed by workers. Whatever the architecture, be it a (distributed) monolith, microliths, microservices or anything in between, messages will be there.

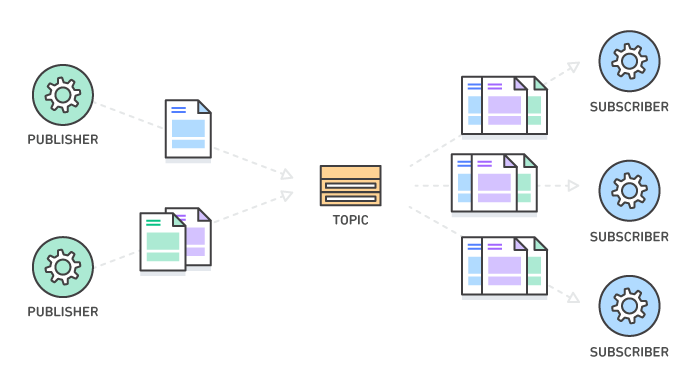

Pub-Sub (publish-subscribe) messaging is an asynchronous, decoupled style of messaging. The side where the message originates has no notion of any of the recipients, while the recipients do not know where the message came from. Pub-sub can help you to build a more resilient application, as you can change either the producing or subscribing side without any impact to the message flow. You could also scale both sides independently.

Pub-Sub messaging. Image from AWS website (AWS pub-sub-messaging)
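To make the decoupling a bit more tangible, here is a minimal in-memory sketch of the pub-sub idea. It is a toy and not how SNS works internally; the `Topic` class and the two inbox lists are hypothetical names made up for this illustration.

```python
from typing import Callable, List

Handler = Callable[[str], None]


class Topic:
    """A toy in-memory topic: publishers and subscribers only share this object."""

    def __init__(self) -> None:
        self._subscribers: List[Handler] = []

    def subscribe(self, handler: Handler) -> None:
        # A subscriber registers itself without knowing who will publish.
        self._subscribers.append(handler)

    def publish(self, message: str) -> int:
        # The publisher hands the message to the topic, not to a recipient.
        for handler in self._subscribers:
            handler(message)
        return len(self._subscribers)


# Two hypothetical services, each with its own "inbox".
billing_inbox: List[str] = []
mailing_inbox: List[str] = []

customer_events = Topic()
customer_events.subscribe(billing_inbox.append)
customer_events.subscribe(mailing_inbox.append)

delivered = customer_events.publish("customer X changed his name to Y")
```

Adding a third subscriber would not require any change on the publishing side, which is the property the rest of this post relies on.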

The AWS messaging candy shop

As our platform runs on AWS, we’ve been trying to use more and more of the services you get (almost) for free out of the box. One of these is Kinesis, which served as an inter-service message bus where messages would be published and consumed by any interested party. Using Kinesis saved us from having to run and manage our own Kafka cluster and did not force us to choose a specific language or framework like some others do. We were on AWS anyway, so we had already bought in. After a while, though, it turned out to lack some of the properties that we needed for our applications. Most annoyingly, it is hard to debug or replay a single message. In Kinesis, you can replay messages by moving the consumer's position in the stream back to a specific record (identified in Kinesis by its sequence number). While this gets the job done, it also replays every message that was sent after the one you wanted to replay. Naturally, the application should be able to deduplicate messages, but it can be tough or costly to make it fully resilient to double delivery. So we looked for alternatives.
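As an aside on the deduplication mentioned above, a consumer can be made tolerant of replays by remembering which message IDs it has already handled. The sketch below keeps that set in memory purely for illustration; `IdempotentConsumer` is a hypothetical name, and a real service would likely need a persistent store, which is where the cost comes in.

```python
from typing import Callable, List, Set


class IdempotentConsumer:
    """Skips messages whose ID has been handled before (in-memory only)."""

    def __init__(self, handler: Callable[[str], None]) -> None:
        self._handler = handler
        self._seen: Set[str] = set()

    def consume(self, message_id: str, body: str) -> bool:
        # Returns True if the message was processed, False if it was a duplicate.
        if message_id in self._seen:
            return False
        self._handler(body)
        # Mark as seen only after the handler succeeded.
        self._seen.add(message_id)
        return True


processed: List[str] = []
consumer = IdempotentConsumer(processed.append)

first = consumer.consume("msg-1", "customer X changed his name to Y")
second = consumer.consume("msg-2", "customer Z signed up")
# Simulate a replay that redelivers the first message.
replayed = consumer.consume("msg-1", "customer X changed his name to Y")
```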

We had been using Amazon’s Simple Queue Service (SQS) for the last few weeks to drive some of the more 1-to-1 asynchronous communication between several services. Unlike the streams of Kinesis or Kafka, where messages are spread over shards or partitions, a queue is a simpler abstraction that can form a natural ‘inbox’ for a service. The queue is at its best for low-priority messaging or processes where the user is not waiting for a response. SQS also lets you configure a dead letter queue, which is where messages end up if they could not be consumed for whatever reason. That is the hook you can use to debug and replay messages for only the consumers that failed. The downside of plain queues is that producers of messages need to know where to route them, so you lose a lot of the advantages of decoupling mentioned earlier.
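To make the dead letter queue mentioned above a bit more concrete: on SQS it is configured through the `RedrivePolicy` queue attribute, a JSON string holding `deadLetterTargetArn` and `maxReceiveCount`. The helper below is a small sketch that only builds that attribute; the function name, the ARN and the receive count of 3 are assumptions for illustration, not values from the repository.

```python
import json


def build_redrive_policy(dead_letter_queue_arn: str, max_receive_count: int = 5) -> str:
    """Return the JSON string SQS expects in the RedrivePolicy attribute."""
    if max_receive_count < 1:
        raise ValueError("max_receive_count must be at least 1")
    return json.dumps(
        {
            "deadLetterTargetArn": dead_letter_queue_arn,
            "maxReceiveCount": max_receive_count,
        }
    )


# Hypothetical ARN of the dead letter queue for one service inbox.
DLQ_ARN = "arn:aws:sqs:eu-west-1:123456789012:orders-inbox-dlq"

# After 3 failed receives, SQS would move the message to the dead letter queue.
queue_attributes = {"RedrivePolicy": build_redrive_policy(DLQ_ARN, max_receive_count=3)}
```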

In comes the AWS Simple Notification Service (SNS), our hero of the day. As the name suggests, it is used to send notifications. What the name does not suggest at first glance is that these notifications can also be delivered as messages to SQS queues. Do you see the pattern emerging? Decoupled messaging through SNS topics that are linked to the inboxes of the services that need those specific messages! Feels a bit like the best of both worlds.

SNS + SQS = 🚀

Alright, let’s try to set it up. I’ve created a repository (available here) with the CloudFormation templates to set up an SNS topic that is connected to one or more SQS queues that will also be created. That connection is called a subscription. To make the stack a bit more manageable and because CloudFormation does not support looping natively, I’ve used Sceptre’s Jinja2 templating in the templates/sns-pubsub.j2 file. You don’t need to make any changes there. If you do not want to deploy in eu-west-1, you need to change config/messaging/config.yaml accordingly. Also, let’s have a look at config/messaging/sns-pubsub.yaml:

When you’re done editing, you can deploy the stack to AWS (this assumes you have Sceptre installed and valid credentials):

This will create the SNS topic and the SQS queues. It also sets up a queue policy that allows the SNS topic to send messages to the queues. If you decide later that you need more or fewer queues, you can just add them to or delete them from the array and use the same command to update the stack.
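For reference, a queue policy of the kind described above usually looks roughly like the document built below: it allows the SNS service to call `sqs:SendMessage` on the queue, but only when the request originates from one specific topic. This is a sketch of the common pattern and may differ from what the templates in the repository generate; the function name and both ARNs are hypothetical.

```python
import json
from typing import Any, Dict


def sns_to_sqs_policy(topic_arn: str, queue_arn: str) -> Dict[str, Any]:
    """Build a queue policy letting one SNS topic send messages to one queue."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "sns.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn,
                # Restrict the permission to messages coming from this topic.
                "Condition": {"ArnEquals": {"aws:SourceArn": topic_arn}},
            }
        ],
    }


# Hypothetical ARNs, for illustration only.
policy = sns_to_sqs_policy(
    topic_arn="arn:aws:sns:eu-west-1:123456789012:customer-events",
    queue_arn="arn:aws:sqs:eu-west-1:123456789012:billing-inbox",
)
policy_json = json.dumps(policy, indent=2)
```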

Let’s send a message on the SNS topic:

Replace YOUR_ARN_HERE with the ARN of the created topic. You can find it in the SNS service in the AWS management console. If the call succeeds, it returns a simple JSON response containing a message ID.
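If you would rather publish from application code, the same call can be sketched in Python. The function below expects an SNS client such as the one returned by `boto3.client("sns")` and uses its `publish` method with the `TopicArn` and `Message` parameters. The `publish_event` helper, the `FakeSns` stand-in and the payload are hypothetical; the fake only exists so the sketch can be tried without AWS credentials.

```python
import json
from typing import Any, Dict, List, Tuple


def publish_event(sns_client: Any, topic_arn: str, payload: Dict[str, Any]) -> str:
    """Publish a JSON-encoded payload on the topic and return the message ID."""
    response = sns_client.publish(TopicArn=topic_arn, Message=json.dumps(payload))
    return response["MessageId"]


class FakeSns:
    """Stand-in for a real SNS client; records calls instead of contacting AWS."""

    def __init__(self) -> None:
        self.calls: List[Tuple[str, str]] = []

    def publish(self, TopicArn: str, Message: str) -> Dict[str, str]:
        self.calls.append((TopicArn, Message))
        return {"MessageId": f"fake-{len(self.calls)}"}


fake_client = FakeSns()
message_id = publish_event(
    fake_client,
    "YOUR_ARN_HERE",
    {"event": "customer_renamed", "customer": "X", "new_name": "Y"},
)
```

With real credentials you would pass `boto3.client("sns", region_name="eu-west-1")` instead of `FakeSns()`.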

If you now log in to the AWS management console and go to SQS, you should see the queues you created, each with one message available. If you click a queue, you can select View/Delete Messages under Queue Actions. Click the blue Start Polling for Messages button and your message should appear. My payload was as follows:

Awesome! As a final side note, when you want to use this in real life, make sure that the producing side has the right roles or permissions to send notifications on the topic. Similarly, make sure the consuming side has the permissions to read from the queue.
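One more thing to keep in mind on the consuming side: unless raw message delivery is enabled on the subscription, SNS wraps what you published in a JSON envelope, and the original text sits in its `Message` field. A consumer therefore has to unwrap the SQS message body first. The sketch below assumes that default envelope; the function name and the sample body are made up for illustration and trimmed to a few fields.

```python
import json


def unwrap_sns_envelope(sqs_body: str) -> str:
    """Return the originally published message from an SNS-wrapped SQS body."""
    envelope = json.loads(sqs_body)
    if envelope.get("Type") != "Notification":
        raise ValueError("not an SNS notification envelope")
    return envelope["Message"]


# Hypothetical SQS message body; a real envelope carries more fields.
sample_body = json.dumps(
    {
        "Type": "Notification",
        "MessageId": "11111111-2222-3333-4444-555555555555",
        "TopicArn": "arn:aws:sns:eu-west-1:123456789012:customer-events",
        "Message": "customer X changed his name to Y",
    }
)

original_message = unwrap_sns_envelope(sample_body)
```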