Computer networking has gone through several major phases over the last decades. New technology, innovation and the growing importance of networking, but also new delivery and consumption models, were key drivers of this evolution. In the early days of IT, computers were hardly connected. Later it became clear that exchanging data over networks was far more effective than using offline media such as paper, floppy disks and tapes. That is when we started to use local area networks. Most of us will remember the days of token ring networks. These networks were quickly extended across multiple buildings, branch offices and so on. Then came the realization that centralizing data was important, so in-house data centers were introduced: data and applications were hosted on servers on the other side of the office wall. Sitting next to the data had some clear benefits: latency was relatively low, bandwidth was cheap and capacity was sufficient. Connectivity to the Internet and to partners, on the other hand, was very limited. Sharing a 64 kbit/s connection among hundreds of employees was pretty common.

In the next stage of the evolution, data centers were outsourced to external companies. Servers were moved from the in-house data center to dedicated data centers, which offered much more flexibility in connectivity, power and cooling. The first limitations became visible. There was simply not enough bandwidth available (or it was too expensive), and the latency was far too high to separate the application from the data. So companies switched to virtual desktops, reducing the distance between data and applications. Security-wise this was also thought of as a secure architecture, since the border of the data center was guarded by firewalls.

In recent years the public cloud has made impressive inroads into IT architecture. It solved a lot of problems: no more capacity guessing, broad connectivity, low upfront investments and the ability to focus on core business. At the same time, the complexity of the overall landscape increased tremendously. The range of different cloud services used in a single architecture is growing rapidly. Try to make a list of all the services used by your company and you will probably lose count. Imagine what this means for the (network) architecture. Applications are now built on a mix of microservices (containers, serverless, PaaS services, etc.) that are not always hosted close to each other. For example, your identity provider is most likely not hosted in the same environment as the microservices that run the application. Connectivity between users and applications, or even between microservices in the same application, increasingly relies on the Internet.

This introduces a number of challenges, especially around the performance, security and manageability of the platform. Taking security as an example, a zero-trust networking architecture requires a completely different approach than hiding your crown jewels behind a ‘secure’ firewall. This is especially true now that a lot of people are working from home and use multiple devices (laptops, tablets, mobile phones) to connect to their applications. A less visible trend is that more and more devices are getting connected to your environment: think of cars, lighting and printers, for example. The influence of broadly available connectivity is visible everywhere, but at times it is a big challenge for networking experts.

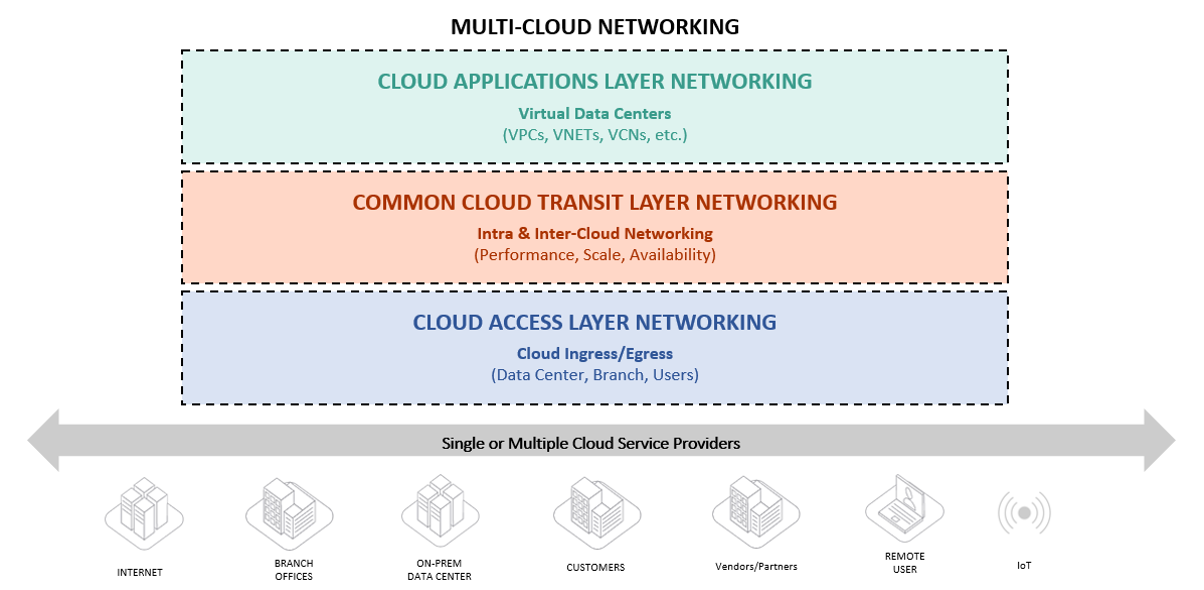

To make these challenges more tangible, to address the changing network and application landscape in the cloud, and to create a scalable environment, a strong architectural approach is required. The cloud connectivity problem can be divided into three layers.

Written by

Steyn Huizinga

As a cloud evangelist at Oblivion, my passion is to share knowledge and to enable, embrace and accelerate the adoption of the public cloud. In my personal life I’m a dad and a horrible sim racer.
