When I am not thinking about technology and the cloud, I am busy cooking. When trying new things, a clear recipe often helps me to achieve the desired result: a well-constructed and tasty meal. Coincidentally, this applies to embracing new strategies in the cloud as well, and this is especially the case for FinOps.

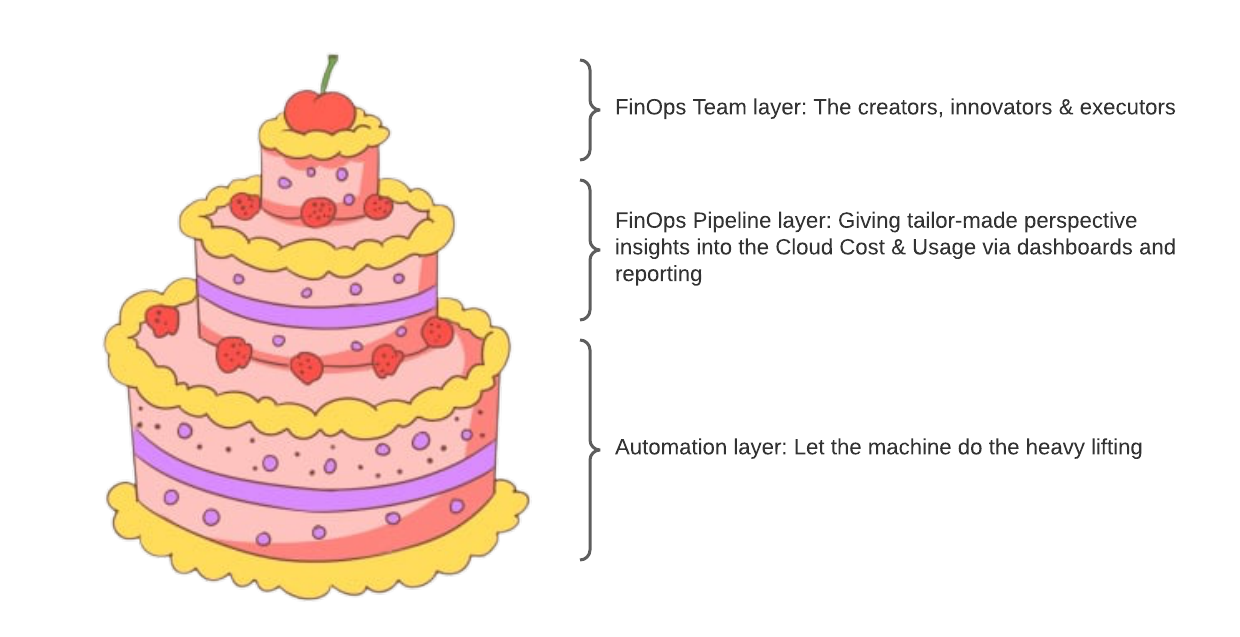

For those who want to better understand the FinOps methodology, I would like to present a well-constructed FinOps model as a recipe for a three-layered cake with cherries on top. If you follow this recipe, you should end up with a healthy, cost-optimised cloud model. This model comes from the conversations I have had with customers who, at the crux of it all, had two questions as they began their FinOps journey:

- How can I report/invoice the cloud cost-related metrics to stakeholders?

- How can I keep track of and reduce cloud costs?

The FinOps model

To answer the above questions and create a working operational approach, take a baking journey with me as we create and bake the FinOps cake model. To begin, I would like to point out the basic ingredients in the model:

- In my opinion, cloud cost is, at its heart, not a finance or accounting problem but a Data Science problem, and we should approach it with that mindset. To see why, have a look at the Cost & Usage Report (CUR) published by AWS, which can run into gigabytes of data every day depending on the usage and complexity of the AWS environment. The CUR can be used to gain visibility into cloud cost and usage at a granular level.

- Automation is key to creating a valid and scalable FinOps practice.

- The FinOps practice is about transforming cloud users and companies by instilling transparency, innovation, confidence and accountability.

- The FinOps practitioners should be involved in building bridges between various facets, sharing knowledge and bringing best practices into action.

With these basic tenets in mind, let us start creating our cake. I will start with the bottom layer, which is the automation layer built on the Data Science approach, and then move on to the middle and top layers.

Bottom layer

The bottom layer is all about automation. Automation is the base layer and the most scalable feature of any model. The tools in this layer, when deployed, should be able to track, trace and notify us of any discrepancy in the cost and usage of the cloud. These are the basic ingredients we need to make this layer:

- Cost monitoring via cost anomaly detection: A self-learning ML model runs in the background and notifies us when it registers a cost spike that does not conform to the historical cost data.

- Commitment monitoring: Whenever Reservations and Savings Plans are under-utilized, we want to be notified. For AWS, the Cost Explorer API can be used.

- Budget and forecast monitoring: We would like to get notified if the forecasted cost will breach the set budget for the assigned period. AWS Budgets can be used for basic notifications.

- Resource monitoring: We would also like to track unused resources, automatically where possible, such as under-utilised machines, storage blocks, EIPs, etc. In AWS, Config rules give an out-of-the-box solution to track and report unused resources. Custom Config rules can be written if more complex tracking is required.
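To make two of these monitors concrete, here is a minimal sketch in Python. It is not the self-learning ML model or the AWS Budgets service described above: the anomaly check is a simple z-score against a trailing window, and the budget check is a linear run-rate projection. The function names, the 7-day window and the threshold of 3 are my own illustrative assumptions.

```python
from statistics import mean, stdev


def detect_cost_anomalies(daily_costs, window=7, threshold=3.0):
    """Return the indices of days whose cost spikes above the trailing window.

    A simple z-score stand-in for a self-learning model; `window` and
    `threshold` are illustrative assumptions, not recommended values.
    """
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: any increase counts as a spike.
            if daily_costs[i] > mu:
                anomalies.append(i)
            continue
        if (daily_costs[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


def forecast_breaches_budget(spend_to_date, days_elapsed, days_in_period, budget):
    """Project spend to the end of the period at the current daily run rate.

    Returns (breach, forecast). A linear projection is an assumption made
    for illustration; real forecasts usually account for seasonality.
    """
    forecast = spend_to_date / days_elapsed * days_in_period
    return forecast > budget, forecast


if __name__ == "__main__":
    costs = [100, 102, 98, 101, 99, 100, 103, 250, 101]
    print(detect_cost_anomalies(costs))
    print(forecast_breaches_budget(600, 10, 30, 1500))
```

In practice the daily cost series would come from the CUR or a cost API rather than a hard-coded list, and the notification would be sent to a channel instead of printed.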

With these basic tools, we can track the basic and some more complex cost and usage KPIs without getting overwhelmed by the amount of data produced every hour of the day. Just to be sure that we are clear about our ingredients: Excel and manual work will not fit and are more likely to ruin the recipe.

This bottom layer can post notifications generated by any of the monitors, and for each kind of notification a specific remediation process should be put in place for best results.
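One way to picture "a remediation process per kind of notification" is a small routing table. The sketch below is purely illustrative: the notification kinds, the `route_notification` helper and the remediation texts are hypothetical placeholders that a FinOps team would replace with its own playbook.

```python
# Hypothetical playbook: one remediation step per kind of notification.
REMEDIATION_PLAYBOOK = {
    "cost_anomaly": "Ask the owning team whether the spike is expected.",
    "commitment_underutilised": "Review Reservation and Savings Plans usage.",
    "budget_forecast_breach": "Revisit the forecast with the budget owner.",
    "unused_resource": "Confirm with the owner, then stop or delete it.",
}

# Fallback for notification kinds the playbook does not cover yet.
DEFAULT_REMEDIATION = "Escalate to the FinOps team for triage."


def route_notification(notification):
    """Attach the matching remediation step to a notification dict.

    `notification` is assumed to carry a "kind" key; the original dict
    is left untouched and an enriched copy is returned.
    """
    action = REMEDIATION_PLAYBOOK.get(notification["kind"], DEFAULT_REMEDIATION)
    return {**notification, "remediation": action}


if __name__ == "__main__":
    alert = {"kind": "unused_resource", "resource": "an unattached EIP"}
    print(route_notification(alert)["remediation"])
```

Keeping the mapping in one place makes it easy to see which monitors still lack an agreed remediation step.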

Middle layer

Dashboards, forecasting, reporting, and business KPIs form the middle layer. I will dare to introduce another key term: ‘FinOps Pipeline’. At the beginning of the pipeline, data is ingested from the various sources available. As the ingested data moves downstream in the pipeline, it gets transformed into usable information. The outputs of the pipeline are dashboards, KPIs, reports and updated forecasts tailored to various stakeholders such as the CEO, CFO, CTO, lead engineers, program managers, the finance team, etc.
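As a rough illustration of the ingest, transform and output stages, here is a toy pipeline in Python. The row fields `team`, `service` and `cost` are simplified stand-ins that I am assuming for the example; they are not the actual CUR column names, and a real pipeline would read from the CUR instead of an in-memory list.

```python
from collections import defaultdict


def ingest(raw_rows):
    """Ingest: keep only rows that carry a usable cost value."""
    for row in raw_rows:
        if row.get("cost") is not None:
            yield row


def transform(rows):
    """Transform: aggregate cost per team; rows without a team tag are 'untagged'."""
    totals = defaultdict(float)
    for row in rows:
        totals[row.get("team") or "untagged"] += float(row["cost"])
    return dict(totals)


def report(totals):
    """Output: one line per team, highest cost first, with its share of the total."""
    grand_total = sum(totals.values())
    lines = []
    for team, cost in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        share = cost / grand_total * 100 if grand_total else 0.0
        lines.append(f"{team}: {cost:.2f} ({share:.1f}%)")
    return lines


if __name__ == "__main__":
    rows = [
        {"team": "data", "service": "S3", "cost": "40.0"},
        {"team": "web", "service": "EC2", "cost": 60},
        {"team": None, "service": "EC2", "cost": 100},
        {"service": "RDS", "cost": None},  # dropped at ingest
    ]
    for line in report(transform(ingest(rows))):
        print(line)
```

The "untagged" bucket is a deliberate design choice: surfacing untagged spend in every report is a simple way to make tagging gaps visible to stakeholders.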

As explained in the first tenet, FinOps is a data problem, so we can compare the FinOps pipeline with a data pipeline. While taking this approach, however, we should also be mindful of the security practice of least-privilege access.

Various third-party and native tools can play the role of a FinOps pipeline, such as CloudHealth by VMware or CloudCheckr by NetApp (third-party tools) and, natively for AWS, the CUDOS dashboard.

Top layer

This is the layer where the FinOps team lives, creates strategies, makes impactful decisions and drives the practice forward. The FinOps team provides input to Cloud Architects and DevOps teams for optimization, and defines FinOps tags and the multi-account structure from a FinOps perspective. This layer defines and executes the commitment strategy for savings, cost scans, and remediation steps in consultation with stakeholders. Financial forecasting, budgeting, and refining the overall practice are done in collaboration with various teams and stakeholders. EDP negotiations are also part of these tasks.

Last but not least, this layer is responsible for sharing FinOps best practices and transforming the cloud practice by bringing in a culture of responsibility and awareness. This in turn helps to grow accountability, which in my view is the greatest achievement.

Thus, we have the three layers:

- Bottom layer, the automation layer

- Middle layer, the FinOps pipeline layer

- Top layer, the FinOps team layer

Some astute readers will point out that the three layers are defined, but what about the cherries? First, I want to thank these readers for keeping an open mind and taking in the information. The cherries are the secret ingredient which the FinOps team can add to make the model their own. But don’t forget to keep some for yourself to celebrate a job well done!

Written by

Mudit Gupta

I am a Machine Learning enthusiast with a background in Aerospace engineering and wide interests in IoT, Data Sciences & Edge computing. Among my hobbies, cooking is at the top, followed by gardening. I like to cook Indian and Mediterranean food and share it with friends and family.
