AWS Cost Optimization in 5 Perspectives - Service Rightsizing

Introducing Cloud Financial Management

What makes cloud cost management so difficult? The main reason is that cloud costs are complex. A cloud bill can easily grow to thousands of cost line items, and service billing models are diverse: each cloud service has its own characteristics and cost elements, sometimes tens of them for a single service. Just parsing this volume of data is already a hard task. Thanks to the billing teams at the cloud providers, that information is already parsed, but it is not necessarily “consumable”: not everyone who has access to the data can make sense of it. Cloud Financial Management shows that a disciplined, structured approach lets you control your expenses and optimize your AWS costs successfully.

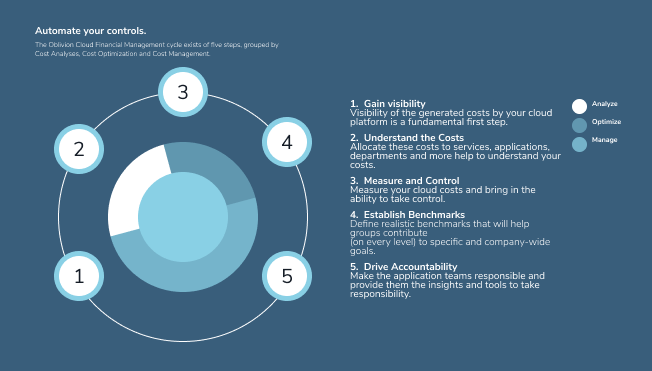

The continuous Cloud Financial Management framework

At Xebia, we have introduced the Cloud Financial Management Cycle (pictured above). This cycle is designed to help any organization implement cloud cost management. As with all cycles, once you have walked through it in its entirety, you end up at the start again. In this blog, we mainly focus on the middle part: Measure & Control and Establishment Benchmarks. This is where cost optimization really takes place, and it is where our five perspectives apply.

Five perspectives on Cost Optimization on the AWS Platform

In our AWS cost optimization initiatives, we analyse AWS consumption from five perspectives, which together give a fairly complete view of the areas that can be improved. In this blog series, we take you through these five perspectives on Cost Optimization. Although the series focuses on AWS, many of the perspectives and underlying concepts apply equally to other cloud platforms.

Perspective 1: Service Rightsizing

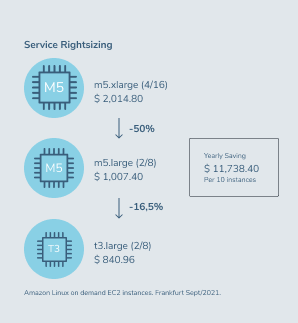

Rightsizing is all about reducing the over-capacity of provisioned resources. We have a long history of leading datacenter-to-AWS migrations, and we have learned that servers can often be provisioned up to 40% smaller than the capacity currently allocated in the firm’s datacenter. To put this statement into numbers, we explain our actions using actual AWS services and their prices. Imagine you are an AWS customer running an m5.xlarge instance (4 vCPU / 16 GB) for your on-demand workloads in Frankfurt. At current prices (2021), this instance costs $2,014.80 per year when it runs continuously. These specifications let you deploy your workloads comfortably and reliably, but is this luxury necessary?

This is where rightsizing starts. We analyse the workload that needs to be processed and, based on the results, determine whether an m5.large (2 vCPU / 8 GB) would be sufficient for the same workload. If it is, the cost drops by 50% to $1,007.40 per year for this instance alone. And if it is not a production workload, we could realize a further 16.5% price drop by shifting to a burstable instance type. An example of a reliable and cost-efficient burstable instance is a t3.large in Frankfurt, which costs $840.96 per year.

In this example, we have rightsized a single instance. Now suppose you find ten instances within your organization where you can do the same exercise: moving all ten from m5.xlarge to t3.large would save more than $11K a year. In our experience over the last few years, most organizations have many more than ten comparable instances.
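The arithmetic behind these figures can be reproduced with a short sketch. The hourly rates below are not quoted in this post; they are derived by dividing the yearly figures above by 8,760 hours, so treat them as illustrative 2021 Frankfurt on-demand rates and check current pricing before relying on them. The function names are our own.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Hourly on-demand rates implied by the yearly figures in the text
# (Frankfurt, 2021). Assumption: the instance runs all year.
HOURLY_USD = {
    "m5.xlarge": 0.23,
    "m5.large": 0.115,
    "t3.large": 0.096,
}


def yearly_cost(instance_type: str, count: int = 1) -> float:
    """Yearly on-demand cost in USD for `count` always-on instances."""
    return round(HOURLY_USD[instance_type] * HOURS_PER_YEAR * count, 2)


def yearly_saving(current: str, target: str, count: int = 1) -> float:
    """Yearly saving in USD from moving `count` instances to `target`."""
    return round(yearly_cost(current, count) - yearly_cost(target, count), 2)


if __name__ == "__main__":
    for name in HOURLY_USD:
        print(f"{name}: ${yearly_cost(name):,.2f} per year")
    for target in ("m5.large", "t3.large"):
        saving = yearly_saving("m5.xlarge", target, count=10)
        print(f"10x m5.xlarge -> {target}: ${saving:,.2f} saved per year")
```

Keeping the rates in one table makes it easy to rerun the comparison when prices or candidate instance types change.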

Zombie assets

Zombie assets are another thing to watch for in Service Rightsizing. It is quite common for organizations to have resources running on AWS that they are not aware of, usually because those resources no longer have an owner or are simply not actively in use. Even if you do not know they are there, they still generate costs. These resources are not limited to server instances: they can also be storage resources such as S3 buckets or DynamoDB tables. Make it common practice in your organisation to regularly review whether resources are still needed, and decommission them when possible.
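A regular review like this can start from a simple filter over a resource inventory. The sketch below is a minimal illustration, assuming you already export an inventory with an owner tag, a last-activity date and a monthly cost per resource. The `Resource` record, its field names and the 90-day idle threshold are hypothetical and do not correspond to any AWS API response.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Resource:
    """Hypothetical inventory record; not an AWS API response shape."""
    resource_id: str
    kind: str                  # e.g. "ec2", "s3", "dynamodb"
    owner: Optional[str]       # value of an owner tag, if any
    last_used: Optional[date]  # last observed activity, if known
    monthly_cost_usd: float


def zombie_candidates(resources: List[Resource], today: date,
                      idle_days: int = 90) -> List[Resource]:
    """Return resources without an owner or that look idle, costliest first.

    A resource with unknown last activity is treated as idle so that a
    person reviews it instead of it being silently skipped.
    """
    def is_idle(r: Resource) -> bool:
        return r.last_used is None or (today - r.last_used).days >= idle_days

    flagged = [r for r in resources if not r.owner or is_idle(r)]
    return sorted(flagged, key=lambda r: r.monthly_cost_usd, reverse=True)


if __name__ == "__main__":
    inventory = [
        Resource("i-001", "ec2", "team-a", date(2021, 6, 1), 168.0),
        Resource("vol-002", "ebs", None, date(2021, 6, 1), 12.0),
        Resource("tbl-003", "dynamodb", "team-b", date(2020, 11, 15), 40.0),
    ]
    for r in zombie_candidates(inventory, today=date(2021, 6, 10)):
        print(r.resource_id, r.kind, r.monthly_cost_usd)
```

The output is a list of candidates for a human review, not a decommissioning list: an unowned resource may still be in use.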

Don’t provision for peak capacity

If you have a workload that needs to be covered, start with a smaller instance type and see whether it fits your needs before you scale up to a larger one. AWS compute capacity is flexible: you can always move to a larger instance type later if the workload requires it.
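One way to apply this is to size for observed usage plus some headroom, rather than for a theoretical peak. The sketch below picks the smallest instance size that covers a measured 95th-percentile usage. The 20% headroom and the function name are assumptions made for illustration, and the candidate list only covers three m5 sizes.

```python
from typing import Optional, Sequence, Tuple

# Candidate sizes ordered from smallest to largest: (name, vCPU, memory GiB).
SIZES: Sequence[Tuple[str, int, int]] = (
    ("m5.large", 2, 8),
    ("m5.xlarge", 4, 16),
    ("m5.2xlarge", 8, 32),
)


def smallest_fit(p95_vcpu_used: float, p95_mem_gib_used: float,
                 headroom: float = 0.2,
                 sizes: Sequence[Tuple[str, int, int]] = SIZES) -> Optional[str]:
    """Return the smallest size covering p95 usage plus headroom, or None.

    `headroom` is a fraction added on top of the measured usage; 0.2 means
    the instance must offer at least 120% of the observed p95 usage.
    """
    need_cpu = p95_vcpu_used * (1 + headroom)
    need_mem = p95_mem_gib_used * (1 + headroom)
    for name, vcpu, mem_gib in sizes:
        if vcpu >= need_cpu and mem_gib >= need_mem:
            return name
    return None  # nothing in the candidate list is large enough


if __name__ == "__main__":
    # A workload using about 1.2 vCPU and 5 GiB at p95 fits an m5.large.
    print(smallest_fit(1.2, 5.0))
```

If monitoring later shows the chosen size is too tight, rerun the check with fresh measurements and move up one size.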

Consider ARM / AMD / Graviton / T-instances

When deciding on your compute technology, look beyond the default instance types offered by AWS to more cost-efficient alternatives. Take the T instances, for example: this line of burstable instances is a price-efficient alternative to the M instances for workloads that do not need sustained full CPU performance. Also consider processors other than the default Intel ones. AMD EPYC-based instances are well optimized and could yield another 10% cost saving compared to the equivalent Intel-based EC2 instances, and ARM-based Graviton instances are worth evaluating in the same way.

Look at provisioned capacity regularly

For example, look at DynamoDB provisioned read/write capacity units. It is good practice to re-evaluate these periodically, so that capacity provisioned at one point in time still matches what the workload actually consumes.
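Such a re-evaluation comes down to comparing what is provisioned with what is consumed. The sketch below is a minimal illustration, assuming you have already collected the peak consumed capacity units for a table from your monitoring. The function name and the 70% target utilization are assumptions, not an AWS recommendation taken from this post.

```python
from typing import Tuple


def capacity_review(provisioned_units: int, consumed_peak_units: int,
                    target_utilization_pct: int = 70) -> Tuple[float, int]:
    """Compare provisioned capacity units with observed peak consumption.

    Returns (utilization, suggested_units), where utilization is the
    fraction of provisioned capacity used at peak and suggested_units is
    the provisioned value that would put the peak at the target utilization.
    """
    if provisioned_units <= 0:
        raise ValueError("provisioned_units must be positive")
    if not 0 < target_utilization_pct <= 100:
        raise ValueError("target_utilization_pct must be in 1..100")
    utilization = consumed_peak_units / provisioned_units
    # Integer ceiling division avoids floating-point rounding surprises.
    suggested = -(-consumed_peak_units * 100 // target_utilization_pct)
    return utilization, max(1, suggested)


if __name__ == "__main__":
    utilization, suggested = capacity_review(100, 20)
    print(f"peak utilization {utilization:.0%}, suggested units {suggested}")
```

The same check applies to read and write capacity separately, and a suggested value far below the provisioned one is a signal to review the table.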

How to optimize your AWS costs even further

Do you have any further questions about Cost Optimization? Feel free to contact us. Ready to learn more about the five perspectives? Read up on Perspective 2: Purchase Model Optimization in the second blog. Need this knowledge on the go, or do you want to share it with a colleague? Download the full original whitepaper here: ‘AWS Cost Optimization in Five Perspectives’.

Written by

Michel Zitman

I am passionate about cloud computing, but especially about what it enables. So as a cloud consultant and Oblivion’s Cloud Financial Management Practice lead, I wholeheartedly believe that introducing and developing CFM/FinOps helps organizations use the cloud efficiently, and thus contributes to the positive impact that technology has on our lives. But good food, wine and books are just as important! Needless to say, family and friends even more.
