Adopting public cloud isn't always easy, and doing it right is usually a struggle. In recent years I've gained some experience as a Cloud Consultant at Xebia, helping companies move to public cloud. I think it's time to share some of this knowledge with you.

Just another datacenter

At the start of "cloud" projects people quite often say: "I can host my application on a virtual machine in public cloud". Yes, obviously you can do that. But most likely it will not bring the change your business is looking for. After the migration you will still be busy running, maintaining and debugging your application. It's not worth spending time and money on a lift-and-shift project, since the economic benefits are at most 10% and your on-premises challenges, such as hardware and scaling issues, will simply be replaced by cloud-related challenges such as storing state, security, dynamic addressing and lack of knowledge. We often call this "your mess for less".

Do: look at the services offered by your cloud service provider, and don't treat public cloud as just an extension of your on-premises data center. Cloud-native services will bring you the most value when it comes to focus, maturity, scalability, security and innovation. Use higher-level, abstracted services such as Function as a Service (FaaS), managed database solutions and queuing services.
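To make the FaaS idea a bit more concrete, here is a minimal sketch of a function written in the style of an AWS Lambda Python handler. The event shape (`order_id`, `items`, `price`, `quantity`) is purely an assumption for illustration. The point is that the file contains business logic only: no web server, no operating system and no scaling configuration.

```python
import json


def handler(event, context):
    """Entry point in the style of an AWS Lambda Python handler.

    The event fields used here are hypothetical; a real event depends on
    whatever service triggers the function.
    """
    # Business logic only: sum the order lines.
    total = sum(item["price"] * item["quantity"] for item in event["items"])
    return {
        "statusCode": 200,
        "body": json.dumps({"order_id": event["order_id"], "total": round(total, 2)}),
    }


if __name__ == "__main__":
    # Local smoke test with a made-up event; the context argument is unused.
    sample_event = {
        "order_id": "A-1",
        "items": [
            {"price": 9.5, "quantity": 2},
            {"price": 1.0, "quantity": 3},
        ],
    }
    print(handler(sample_event, None))
```

Everything around this function (provisioning, patching, scaling) is left to the provider, which is exactly the focus this section argues for.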

Tools over services

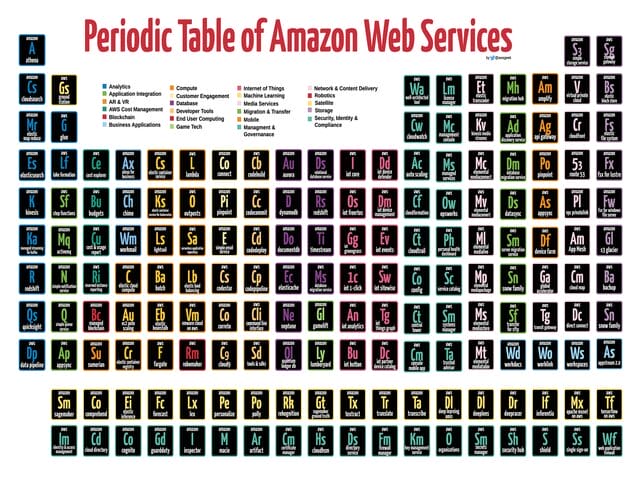

The real power of a cloud service provider is the number and variety of services it provides. If you have a brief look at the Periodic table of AWS you will notice around 170 services, from compute to game tech, from mobile to blockchain and from augmented reality & virtual reality to security. There is a service for almost everything and more are coming. According to the big tech giants this is just the beginning.

In my daily life as a cloud consultant I often notice people pushing for their own tools rather than cloud services, driven by reasons such as unfamiliarity with cloud services, lack of trust in those services, force of habit, job protection or false promises from tool vendors. This mistake is most often made in areas where people tend to solve issues with tools rather than focussing on the objectives, such as encryption, secrets management, monitoring and vulnerability scanning.

Do: use cloud services in your architecture. In most cases there is a service that covers almost all your requirements. If you cannot find a suitable service, reconsider your requirements; most likely you are doing something terribly wrong. Remember the high burden of selecting, purchasing and running your own tools. You must have a very good reason to do so.

Applying generic security controls

The risks identified for running applications in public cloud are somewhat comparable to those of on-premises workloads, with a few additions. Although the risks are similar, the solutions used to mitigate them don't have to be the same in public cloud and on-premises. Preferably they are not. In most cases we use monolithic and expensive solutions to solve our on-premises challenges, such as firewall and proxy, key management and remote access appliances.

Without cloud knowledge your first response would probably be: let's use a virtualised version of this appliance so that we have a single pane of glass on our security landscape and can reuse specific knowledge. Sorry, you are wrong. You will face challenges implementing this due to the lack of integration, orchestration, scalability, availability and elasticity, and due to high costs.

Do: rewrite your requirements so that they define the output ('what'), let cloud specialists design the 'how' and allow them to come up with new ideas. For example, the requirement "Customer data should be protected at-rest and in-transit" will give you a more valuable native solution based on cloud services than a requirement that specifies which already known tool to use.
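As a rough sketch of what an output-based requirement can look like, the example requirement above can be written as a check on the outcome rather than as a tool choice. Everything here is hypothetical: the `DataStore` fields, the store names and the inventory are made up for illustration, and in practice the facts would come from your provider's configuration data.

```python
from dataclasses import dataclass


@dataclass
class DataStore:
    # Hypothetical description of a storage resource.
    name: str
    holds_customer_data: bool
    encrypted_at_rest: bool
    tls_enforced: bool


def requirement_violations(stores):
    """Names of stores failing 'customer data protected at-rest and in-transit'."""
    return [
        store.name
        for store in stores
        if store.holds_customer_data
        and not (store.encrypted_at_rest and store.tls_enforced)
    ]


# Made-up inventory for illustration only.
inventory = [
    DataStore("orders-db", True, True, True),
    DataStore("exports-bucket", True, True, False),
    DataStore("public-assets", False, False, False),
]

print(requirement_violations(inventory))
```

Note that the check says nothing about which product does the encryption. That is left to the people designing the 'how'.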

Agnostic architectures

This is my favourite topic. I see this as the biggest marketing slogan currently in use, so it's no surprise that people believe the myth. For years vendors damaged their own reputation by increasing license costs year over year and by forcing you to switch to newer products through unpredictable product lifecycles. This has led to something we now call "avoid vendor lock-in". Lots of vendors are trying to sell you tools or solutions that promise you can actually avoid vendor lock-in. "If my cloud vendor increases the prices I can use my workload mobility to run my application on a cheaper vendor within minutes". Sounds good, doesn't it? I have a little surprise for you: every technical decision will lead to a vendor lock-in.

Designing your application to be fully agnostic will not get you anywhere, except to the point where you run out of time and budget. The likelihood of applications moving from one platform to another because of high costs or sudden price increases is very small, and those risks can be mitigated in most cases. Known exceptions are Dropbox and Netflix, which have (partly) left public cloud due to extremely high volumes. With respect, I don't expect your applications will ever reach that scale. You will hardly ever be able to create a decent business case for workload mobility when comparing the pros and cons of cloud-native vs agnostic (common denominator).

Do: make sure your organisation is prepared. Instead of having discussions around the fear of vendor lock-in, discuss the switching costs of your application. For example: we can build this application on cloud service provider X in four sprints, and most likely we can build the same on cloud service provider Y within the same amount of time or even faster, since the speed of innovation is massive and the major cloud service providers are following each other.
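A back-of-the-envelope way to have that switching-cost discussion is to compare expected effort: the build effort now, plus the rebuild effort weighted by how likely you think a switch is. The sketch below does just that. Only the four sprints come from the example above; the agnostic build effort, the agnostic switch effort and the 5% switch probability are placeholder assumptions that you should replace with your own estimates.

```python
def expected_sprints(build_sprints, switch_sprints, switch_probability):
    """Build effort plus switch effort weighted by the chance of switching."""
    return build_sprints + switch_probability * switch_sprints


# Cloud-native: four sprints to build, roughly four more to rebuild elsewhere.
native = expected_sprints(build_sprints=4, switch_sprints=4, switch_probability=0.05)

# Agnostic: assumed (placeholder) higher build effort, cheap switch.
agnostic = expected_sprints(build_sprints=10, switch_sprints=1, switch_probability=0.05)

print(f"cloud-native: {native:.2f} sprints, agnostic: {agnostic:.2f} sprints")
```

With these placeholder numbers the agnostic design only wins if a switch is close to certain, which is the argument of this section in arithmetic form.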

Building a new platform

It's very common for people to think that public cloud is just a pool of unlimited compute, network and storage resources and that you have to build your own platform on top of that. Yes, public cloud provides basic resources as Infrastructure as a Service, but that is probably not the type of service you want to use. The biggest benefit of public cloud is that cloud service providers are building more and more services for you. Why run your own power plant when you can get a commodity such as electricity from a cable running to your building?

I will not make many friends when I say that IT is a commodity. The years when putting together a server, mounting it in the datacenter and installing Linux and an Oracle database on it were scarce skills are gone. Today everyone with a little bit of technical knowledge, a credit card and a browser can provision a database. IT is a commodity.

The real difference is made in the business logic you run on the commodity. The more you can focus on building logic that enables your business, the better your company can distinguish itself. Don't make the mistake of thinking your company has different IT requirements than all the other companies on this globe. It doesn't make sense to spend time and money on building a platform when your cloud service provider has already built, and keeps building, one for you. Running a platform like OpenShift or Kubernetes on public cloud is most often a bad idea since it will drift away from the core principles of the cloud service provider (e.g. IAM and networking controls).

Do: use the platform capabilities provided by the cloud service provider. Don't think you can do it better. You simply don't have the scale they have, not in budget and especially not in knowledge.

End state architecture

The pace of innovation in public cloud is fast, really fast. The number of new services and features launched annually is massive and increasing each year. If you can maintain the status quo for a number of years you are doing something wrong. New features can lead to new insights on performance, scalability, security and costs. The pay-per-use model allows you to switch without breaking any contracts. Agility.

Due to the economics of public cloud the length of the lifecycle for workloads is also very flexible. In more traditional environments it was very common to have long-term agreements (contracts of 4-8 years). With cloud, most pricing models are based on a pay-per-use model and often billed per minute, second or sub-second. Billing stops immediately after you terminate the resource. This allows you to have shorter lifespans for marketing campaigns, and also very long lifespans for archive purposes.
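A small worked example shows why the lifespan matters so much under pay-per-use. The hourly rate below is a made-up placeholder, not a real price; the only thing the sketch assumes about billing is that it is metered per second and stops when the resource is terminated.

```python
SECONDS_PER_HOUR = 3600


def pay_per_use_cost(rate_per_hour, seconds_used):
    """Cost of a resource billed per second at a given hourly rate."""
    return rate_per_hour * seconds_used / SECONDS_PER_HOUR


RATE_PER_HOUR = 0.50  # hypothetical placeholder rate

# A two-week marketing campaign, terminated when it ends.
campaign_seconds = 14 * 24 * SECONDS_PER_HOUR
campaign_cost = pay_per_use_cost(RATE_PER_HOUR, campaign_seconds)

# The same resource left running for a full year.
year_seconds = 365 * 24 * SECONDS_PER_HOUR
year_cost = pay_per_use_cost(RATE_PER_HOUR, year_seconds)

print(f"campaign: {campaign_cost:.2f}, full year: {year_cost:.2f}")
```

The rate is the same in both cases; the only thing that changes the bill is how long the resource exists.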

Do: consider your architecture a rolling release that should continuously improve. Don't be afraid to change your design or opinion based on the ever-changing world of public cloud.

Disclaimer: any resemblance to actual companies is purely coincidental. Do not worry; the challenges described here are generic and occur more often than not.

Written by

Steyn Huizinga

As a cloud evangelist at Oblivion, my passion is to share knowledge and enable, embrace and accelerate the adoption of public cloud. In my personal life I’m a dad and a horrible sim racer.
