Product Innovation in the age of Connected Products and Services

‘Perfection is not a destination; it is a continuous journey that never ends’ – Brian Tracy

In a dynamic business environment that demands fast and frequent delivery of software, it is difficult to test every possible use-case scenario in pre-production. Testing is great for detecting and fixing the defects we expect to occur, whereas the defects we uncover in production are often complete surprises.

Recently, British Airways was forced to cancel hundreds of flights after a catastrophic IT systems failure severely disrupted its operations. Heathrow airport, the hub of British air operations and one of the busiest airports in the world, witnessed unprecedented chaos, and tens of thousands of travelers were severely inconvenienced on a busy holiday weekend, as the airline couldn’t restart its flight operations for nearly three days. This disaster not only caused significant financial losses, but also severely dented the image of British Airways. This is precisely the kind of nightmarish IT disaster that every business wants to prevent.

Digital enterprises, connected landscape, and demand for 100% uptime

As a perfect storm of emerging technologies and disruptive business paradigms such as Industry 4.0 accelerates the pace of digital transformation, businesses across virtually every major domain are rapidly reinventing themselves as digital enterprises. Perhaps the greatest impact of this transformation is the demand for continuous upkeep of software and systems to support an always-on or on-demand environment in a connected landscape. In operational terms this has a huge impact, as businesses must reorient their technology operations and QA processes to support 100% uptime.

Changing facets of Software Quality Assurance (QA)

As software development evolved into an iterative process, software QA has kept pace and developed into an integrated, continuous process that supports rapid and incremental delivery. Thanks to the phenomenal growth in connected products, devices, and the multiplicity of browsers, the complexity of software applications has also increased exponentially, giving rise to the need for comprehensive testing and quality assurance covering all functional and non-functional (usability, reliability, performance, and security) aspects. As the industry moves rapidly towards cloud-based SaaS deployments, test automation, regression testing, and DevOps have not only become the norm, but are viewed as an indispensable part of QA for the upkeep of always-on systems.

Critical deployments and nightmare of IT disasters

Even with a well-established pre-production QA process, in a fast-paced environment with unknown or dynamic variables there is always a chance that the system could behave in an unanticipated manner and cause an IT disaster in the production environment. No business wishes to risk a catastrophic software failure, especially in critical deployments that demand 100% SLAs. Businesses therefore try to test system behavior under severe load or stress, but very often find it a major challenge to recreate the production environment.

Testing in Production (TiP)

The emerging QA practice of Testing in Production (TiP) is a logical extension of software QA, and can be an invaluable tool to ensure that the software and system perform as expected and to help prevent IT disasters. TiP is not a futuristic myth, but a practice that is already being used successfully by digital pioneers like Amazon, Facebook, and Google. If traditional QA is all about testing the software in pre-production, i.e. prior to release, TiP is a set of QA methodologies for testing and monitoring software and system performance in a live environment, with real users and production data. The key advantages of TiP are:

- Testing with production data

- Validating the business logic and customer experience in a live environment

- Testing, not simulating, disaster recovery

It is important to note that TiP is not the last step in the software QA process, but should be viewed as a first step towards deployment.

Different types of TiP

Some of the popular methodologies used for TiP are briefly described below:

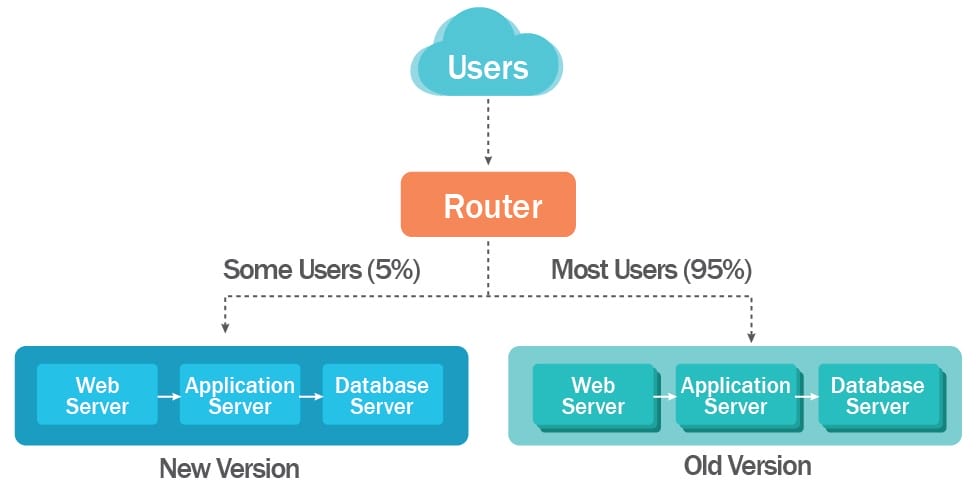

Canary Testing, also known as canary deployment, is a technique for testing new software with a small subset of live users in the production environment. If the system performance and results meet expectations, the software is released to all users. If not, fixes are rolled in before the feature is rolled out to a wider audience.

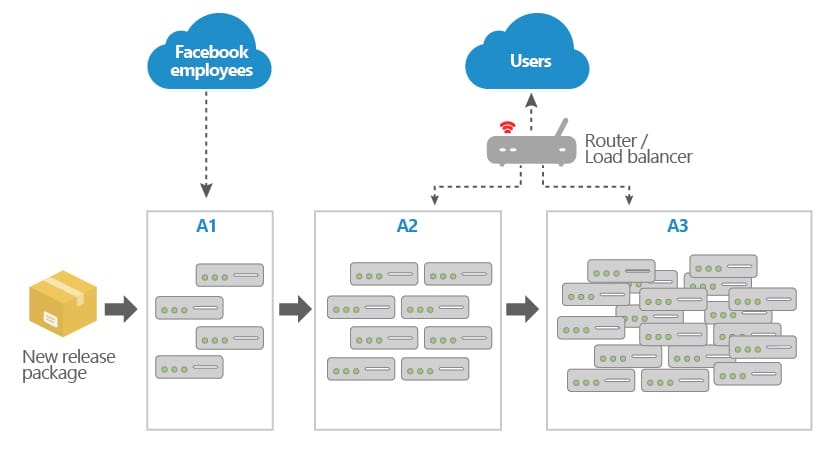

Facebook’s release process is a great example of successful usage of canary deployments. As many of you are aware, Facebook does multiple releases in a day. Facebook uses a testing strategy with multiple canaries, the first one being visible only to their internal employees and having all the feature toggles turned on so that they can detect problems with new features early.

Canary testing is an effective strategy for incremental rollout and validation of customer experience and business logic in a live environment.
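To make the idea of "a small subset of live users" concrete, here is a minimal sketch of how a router might decide who sees the canary. It is an illustration only, not a description of how Facebook or any other company does it: the function names `in_canary` and `choose_version`, the version labels, and the choice of hashing the user ID are all assumptions made for this example.

```python
import hashlib


def in_canary(user_id: str, canary_percent: float) -> bool:
    """Return True if this user falls into the canary cohort.

    Hashing the user ID (instead of picking randomly per request) keeps
    each user on the same version for the whole rollout.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # stable bucket in 0..9999
    return bucket < canary_percent * 100   # e.g. 5% -> buckets 0..499


def choose_version(user_id: str, canary_percent: float,
                   stable: str = "v1", canary: str = "v2") -> str:
    """Pick the release a user should be served (labels are hypothetical)."""
    return canary if in_canary(user_id, canary_percent) else stable


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    exposed = sum(in_canary(u, 5) for u in users)
    print(f"{exposed} of {len(users)} users routed to the canary")
```

Because the bucket for a user never changes, raising the percentage from 5 to 20 only adds users to the canary cohort; nobody who already saw the new version is moved back to the old one.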

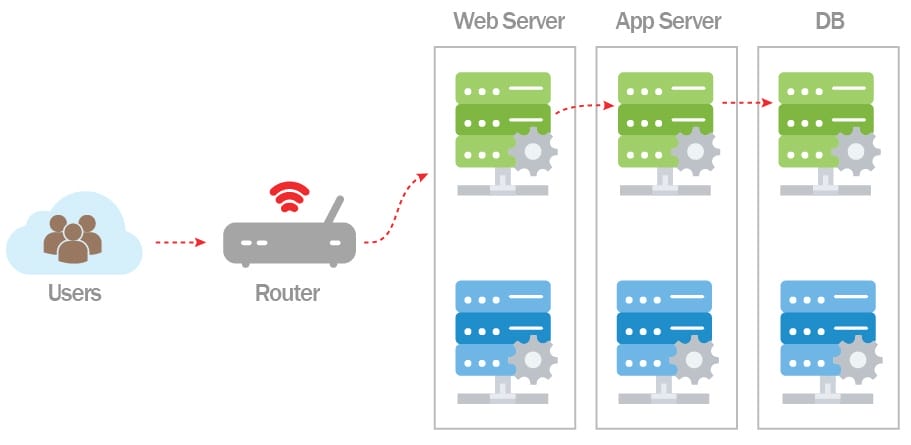

Blue-Green Deployment is a technique in which you create two live environments that are as identical as possible. If, say, the current version is live in Blue, the new version is released to the Green environment. If the software and system performance are stable and meet expectations, traffic is routed to the Green environment and the Blue environment is made idle. Conceptually, this is similar to canary deployment (except that you are not testing with live users), and it also facilitates a rapid rollback from the new version to the old one.
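The switch-and-rollback logic described above can be sketched in a few lines. This is a toy model under stated assumptions: `BlueGreenRouter` and the `health_check` callback are hypothetical names invented for this example, and in a real deployment the "switch" would be a load balancer or DNS change rather than an attribute on a Python object.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class BlueGreenRouter:
    """Tracks which of two identical environments receives live traffic."""
    live: str = "blue"

    def idle(self) -> str:
        """Name of the environment that is not currently serving traffic."""
        return "green" if self.live == "blue" else "blue"

    def switch(self, health_check: Callable[[str], bool]) -> bool:
        """Route traffic to the idle environment only if it looks healthy."""
        candidate = self.idle()
        if not health_check(candidate):
            return False  # keep serving from the current environment
        self.live = candidate
        return True

    def rollback(self) -> None:
        """Send traffic back to the previous environment."""
        self.live = self.idle()


if __name__ == "__main__":
    router = BlueGreenRouter()
    # Assumed check: pretend the new release on green passed its smoke tests.
    router.switch(lambda env: env == "green")
    print("live environment:", router.live)
    router.rollback()
    print("after rollback:", router.live)
```

The rollback is cheap precisely because the old environment is left running and idle rather than torn down.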

A/B Split Testing is a popular mode of experimental testing in which two versions of a web page or an app are deployed and their performance is evaluated. The version that performs better is taken forward and the other is discarded. This is an effective technique for testing variants of user experience, website design, and even product descriptions and headlines. Successful retailers such as Amazon use A/B testing widely to improve the UX and conversion rate of their services. Some businesses also use multivariate testing, an advanced form of A/B testing in which multiple elements vary between the versions to see which combination performs better.
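Deciding which version "performs better" usually means checking that the difference is not just noise. One common way to do this, shown here as a minimal sketch, is a two-proportion z-test on conversion counts. The function name and the sample numbers below are made up for illustration, and the 5% significance threshold is a convention rather than a rule.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> Tuple[float, float]:
    """Compare conversion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided. A positive z
    means B converted at a higher rate than A.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided tail probability of a standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical data: 200/1000 conversions for A, 260/1000 for B.
    z, p = two_proportion_z_test(200, 1000, 260, 1000)
    verdict = "B is ahead" if (p < 0.05 and z > 0) else "no clear winner"
    print(f"z = {z:.2f}, p = {p:.4f}: {verdict}")
```

This approximation assumes reasonably large samples in both variants; with only a handful of conversions an exact test would be a safer choice.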

Synthetic User Testing, also known as synthetic monitoring, is a sophisticated performance and application monitoring technique that tests the user experience with simulated users. It makes it possible to exercise different attributes of the system and measure their performance without real or live users. This is a popular strategy for testing new features and services before real users come in.
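A synthetic check is essentially a scripted user journey run on a schedule and timed against a budget. The sketch below shows that core loop under some assumptions: `ProbeResult`, `run_probe`, and the `fake_login` step are hypothetical names for this example, and a real probe would call your application (for instance over HTTP) where the placeholder step sits.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProbeResult:
    name: str
    ok: bool
    latency_s: float
    error: Optional[str] = None


def run_probe(name: str, step: Callable[[], None],
              budget_s: float) -> ProbeResult:
    """Run one scripted step and record whether it met its latency budget."""
    start = time.perf_counter()
    try:
        step()
    except Exception as exc:  # any failure in the journey fails the probe
        return ProbeResult(name, False, time.perf_counter() - start, repr(exc))
    latency = time.perf_counter() - start
    return ProbeResult(name, latency <= budget_s, latency)


if __name__ == "__main__":
    def fake_login() -> None:
        # Placeholder for a real scripted action against the live system.
        time.sleep(0.01)

    result = run_probe("login", fake_login, budget_s=0.5)
    print(result)
```

Passing the step in as a function keeps the timing and error handling separate from the journey itself, so the same runner can be reused for login, search, checkout, and so on.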

Real User Monitoring (RUM), also known as passive monitoring, is a technique used to record the actual user experience and activity in real time with the help of a non-obtrusive monitoring tool. The data collected is analyzed to determine the performance of the website, application, or online service, and to identify bottlenecks and potential areas for improvement. In contrast to synthetic monitoring (which is executed with scripts and simulated users), real user monitoring is unscripted and is done on real users. A combination of synthetic and real user monitoring can be quite effective in providing a complete view of the system, application performance, and user experience.
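On the analysis side, RUM data is often summarized with percentiles rather than averages, because a few very slow page loads can hide behind a healthy-looking mean. Here is a minimal sketch of that aggregation. The function names and the sample timings are invented for this example, and the nearest-rank percentile used here is one of several accepted definitions.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of the recorded samples (pct in 0..100)."""
    if not samples:
        raise ValueError("no samples recorded")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(load_times_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize page load times collected from real user sessions."""
    return {
        "p50": percentile(load_times_ms, 50),
        "p95": percentile(load_times_ms, 95),
        "max": max(load_times_ms),
    }


if __name__ == "__main__":
    # Hypothetical load times (ms) reported by real browsers for one page.
    observed = [120, 135, 150, 160, 180, 210, 250, 400, 950, 3200]
    print(summarize(observed))
```

Tracking the p95 alongside the median makes it easier to spot the bottlenecks that only a minority of users hit.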

Fault Injection and Recovery testing are techniques that introduce a fault or failure into the production environment to test the system performance, error handling capability, and recovery process. These are often used in combination with stress testing to test robustness and effectiveness of the designed disaster recovery process.
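The idea can be illustrated with a small sketch that wraps a dependency so it fails on purpose, and then checks that the caller recovers. All names here (`InjectedFault`, `with_fault_injection`, `call_with_fallback`, and the pricing functions) are hypothetical and exist only for this example; dedicated fault injection tools typically work at the infrastructure level rather than inside application code.

```python
import random
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class InjectedFault(RuntimeError):
    """Raised on purpose to simulate a failing dependency."""


def with_fault_injection(func: Callable[..., T], failure_rate: float,
                         rng: Optional[random.Random] = None) -> Callable[..., T]:
    """Wrap func so that a fraction of calls fail with InjectedFault."""
    rng = rng or random.Random()

    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault(f"injected failure in {func.__name__}")
        return func(*args, **kwargs)

    return wrapper


def call_with_fallback(primary: Callable[[], T], fallback: Callable[[], T]) -> T:
    """Recovery path under test: use the fallback when the primary fails.

    Only InjectedFault is caught to keep the sketch small; real recovery
    code would handle the genuine errors of the dependency.
    """
    try:
        return primary()
    except InjectedFault:
        return fallback()


if __name__ == "__main__":
    def live_price() -> str:
        return "price from live service"

    def cached_price() -> str:
        return "price from cache"

    flaky = with_fault_injection(live_price, failure_rate=0.3,
                                 rng=random.Random(7))
    for _ in range(5):
        print(call_with_fallback(flaky, cached_price))
```

Passing in a seeded `random.Random` makes a run repeatable, which helps when a recovery bug needs to be reproduced.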

In my experience, even businesses with sophisticated software assurance capabilities tend to overlook the need for Testing in Production. Successful digital unicorns like Facebook, Amazon, and Google have demonstrated the effectiveness of TiP in the continuous upkeep of reliable software. Any digital enterprise that aims to deliver a top-notch customer experience and wants to avoid the nightmare of IT disasters must make TiP an integral part of its quality assurance.